Motivation

Chronic wound care remains a major healthcare challenge, requiring regular assessments that are costly and often delayed.

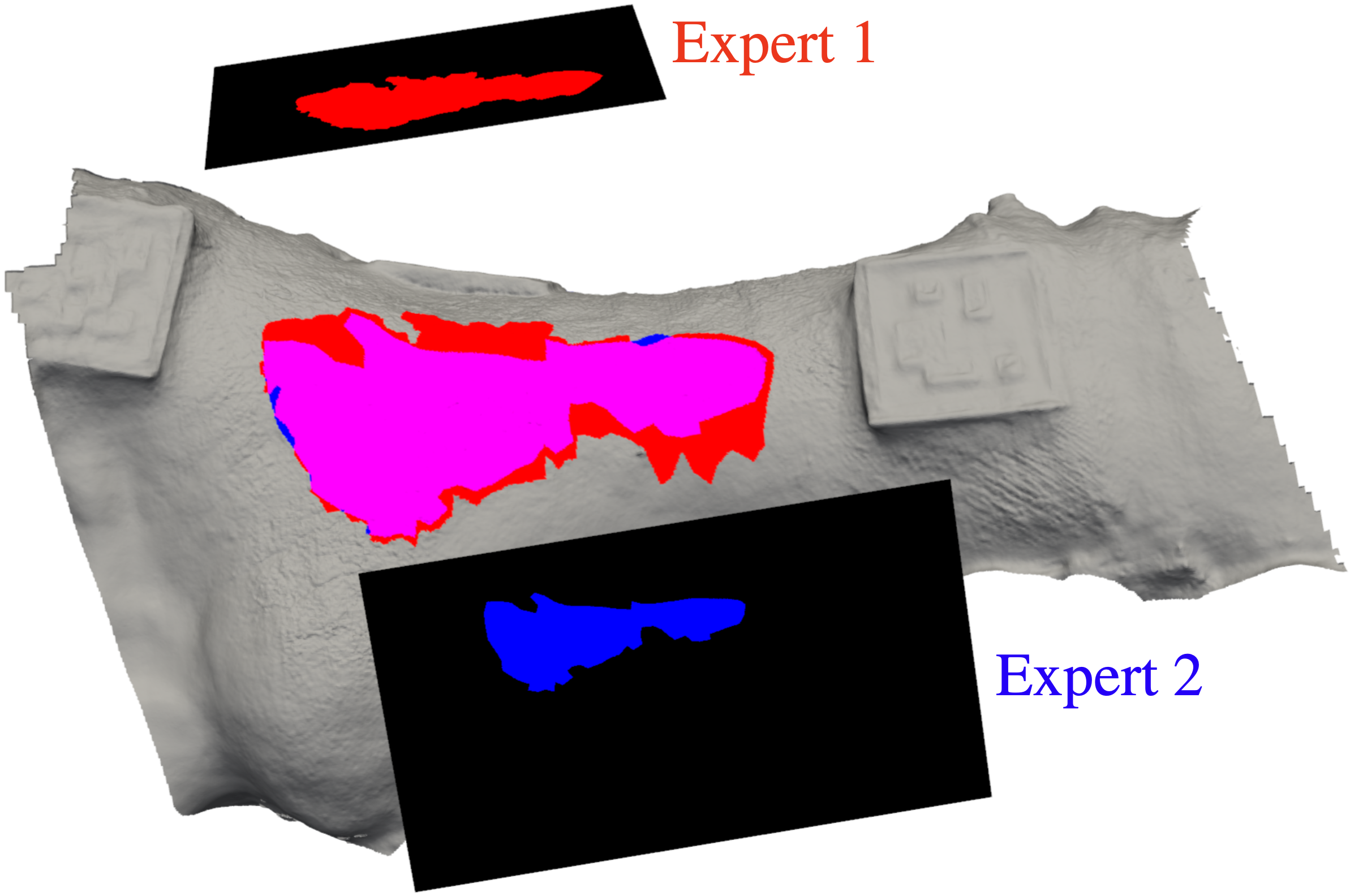

Most existing automated wound segmentation methods operate in 2D, leading to inconsistent results across viewpoints and inaccurate 3D measurements.

WoundNeRF addresses this by learning a 3D‑consistent wound segmentation field directly from multi‑view images.

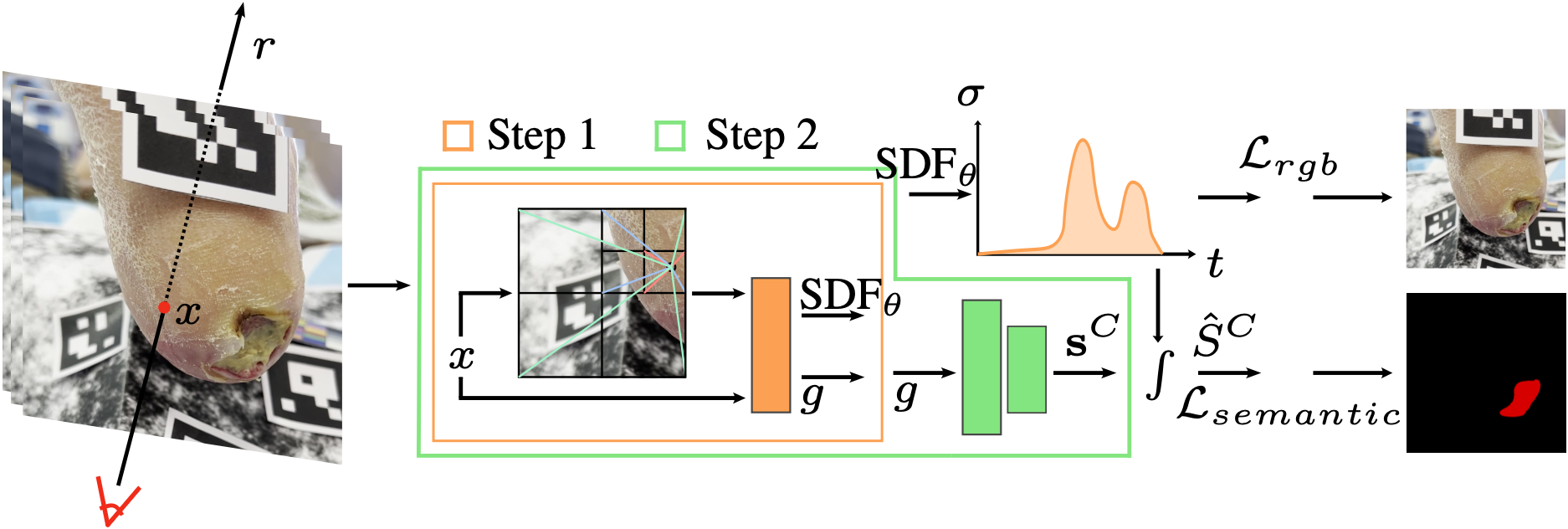

Approach

Building on advances in Neural Radiance Fields (NeRFs), WoundNeRF jointly models wound appearance, geometry, and semantics in a unified 3D space.

- Aggregates automatically generated 2D segmentations into a 3D semantic field

- Enforces multi‑view consistency without requiring dense manual labeling

- Focuses on accurate 3D reconstruction of wound regions rather than hallucination or blending
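As an illustration of how a semantic field can share geometry with appearance, the sketch below composites colour and a wound score along a single camera ray using standard NeRF volume-rendering weights. It is a minimal sketch under stated assumptions, not the WoundNeRF implementation: the function name `render_ray`, the per-sample `wound_logits` input, and the choice of a sigmoid over the composited logit are all hypothetical and not taken from the source.

```python
import math


def render_ray(densities, deltas, colors, wound_logits):
    """Composite colour and a wound probability along one ray.

    densities:    non-negative volume density at each sample
    deltas:       distance covered by each sample along the ray
    colors:       (r, g, b) tuple at each sample
    wound_logits: raw wound score at each sample (assumed semantic head)
    """
    transmittance = 1.0  # fraction of light that has not been absorbed yet
    rgb = [0.0, 0.0, 0.0]
    logit = 0.0
    weights = []
    for sigma, delta, color, score in zip(densities, deltas, colors, wound_logits):
        alpha = 1.0 - math.exp(-sigma * delta)  # opacity of this segment
        weight = transmittance * alpha          # contribution of this sample
        weights.append(weight)
        rgb = [acc + weight * c for acc, c in zip(rgb, color)]
        logit += weight * score                 # same weights as for colour
        transmittance *= 1.0 - alpha
    wound_prob = 1.0 / (1.0 + math.exp(-logit))  # binary wound vs. background
    return rgb, wound_prob, weights


# One empty sample followed by two dense samples; the first dense one is "wound".
rgb, wound_prob, weights = render_ray(
    densities=[0.0, 50.0, 50.0],
    deltas=[0.1, 0.1, 0.1],
    colors=[(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)],
    wound_logits=[-5.0, 4.0, -5.0],
)
```

Because the semantic score reuses the weights derived from density, a pixel's label is tied to the same surface point in every view, which is one way multi-view consistency can emerge from the 3D representation itself.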

Results

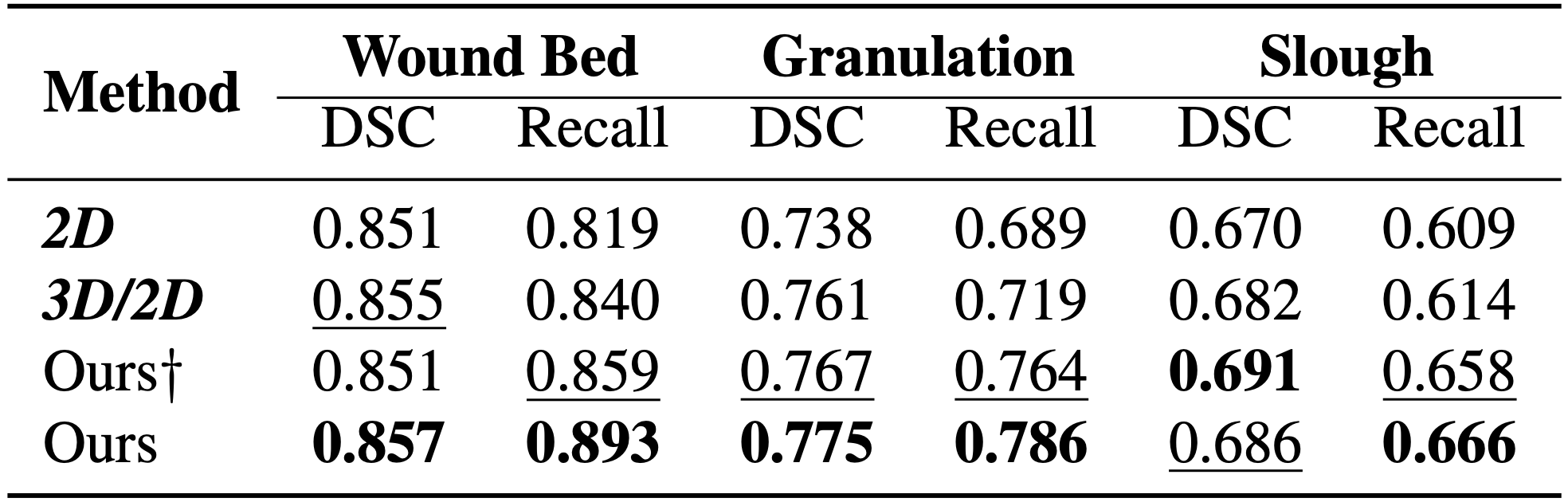

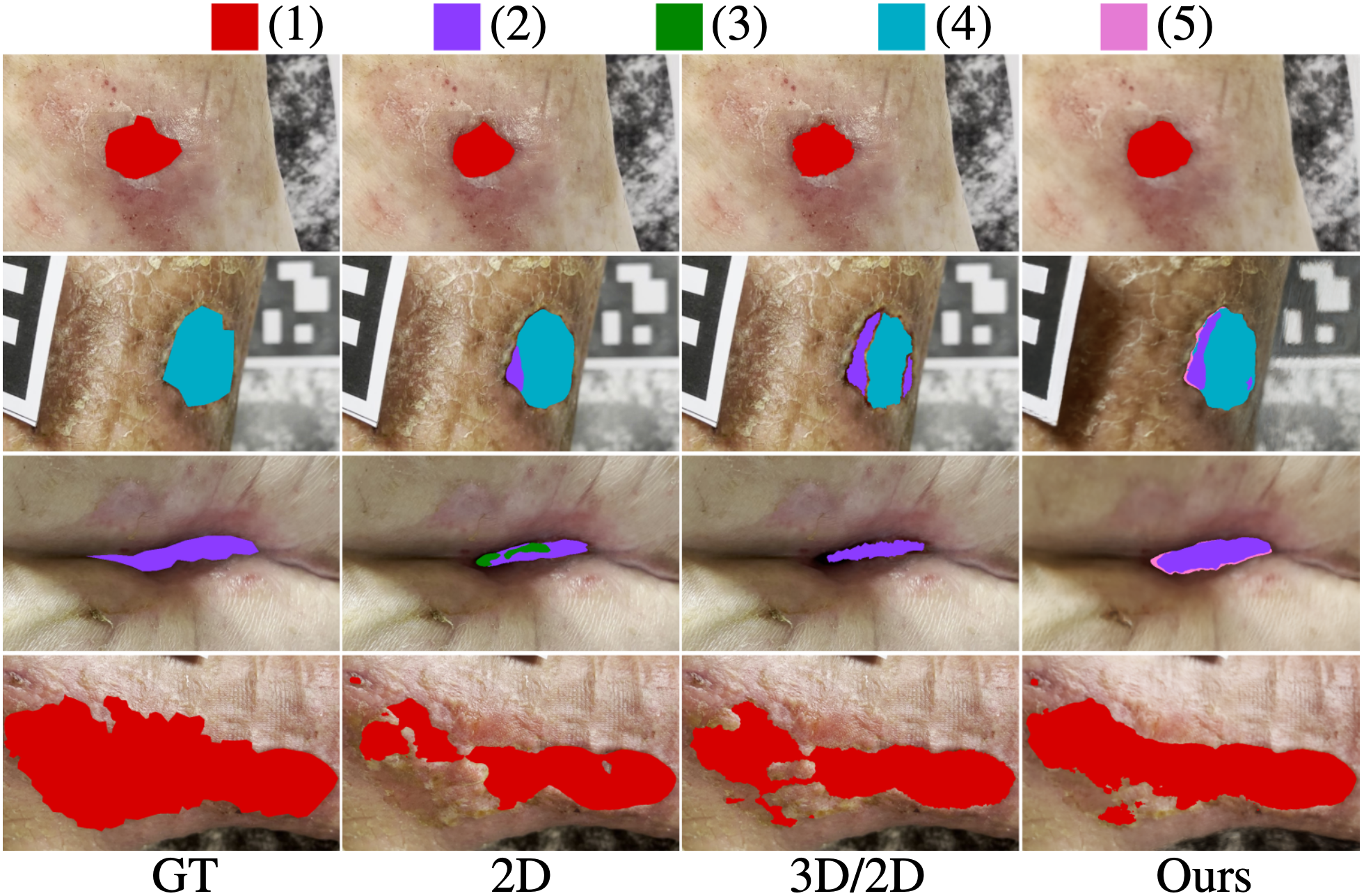

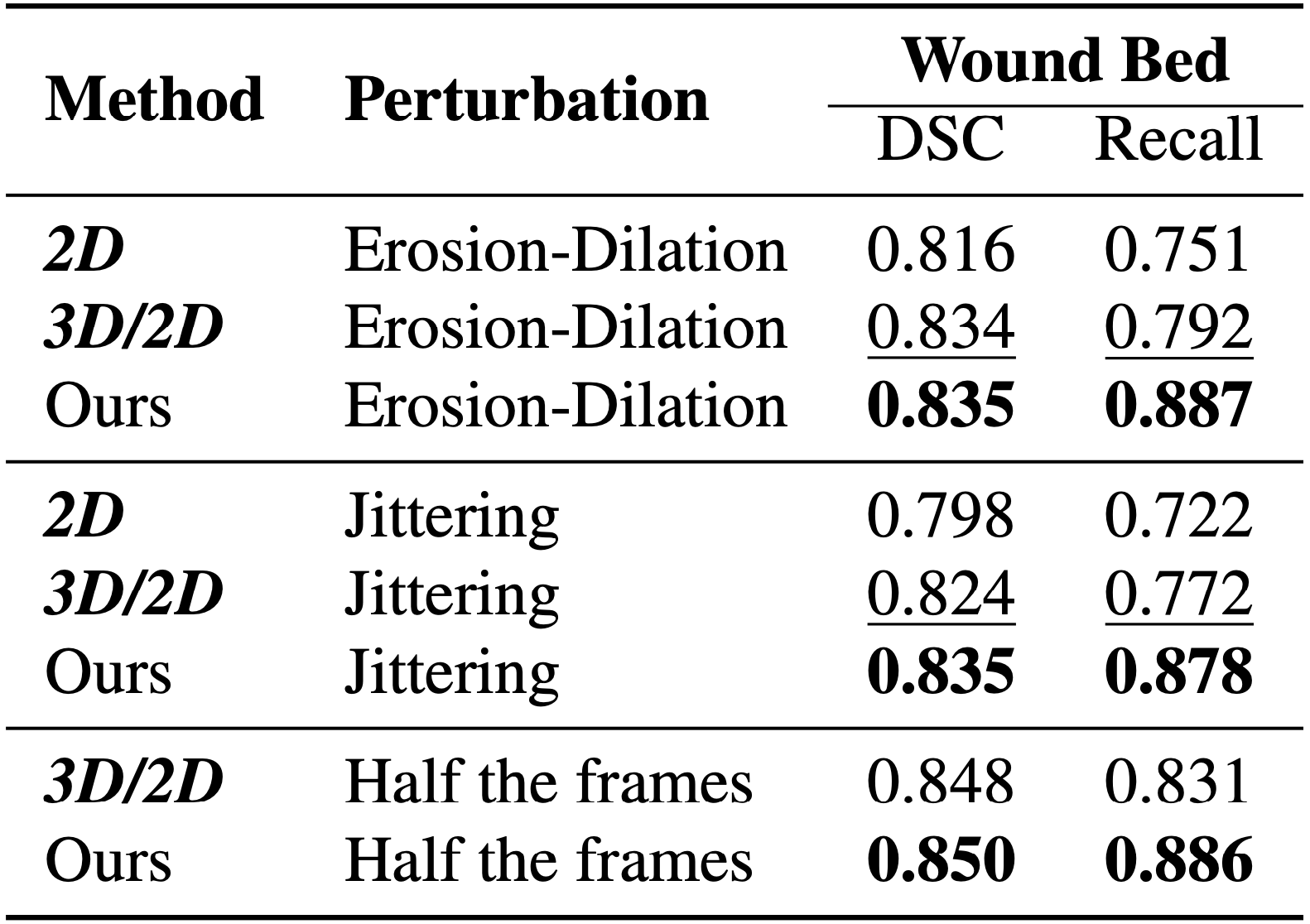

We evaluate WoundNeRF on a real patient dataset collected with clinical collaborators.

Baselines:

- 2D: SegFormer MiT‑B5 trained on single‑view annotations

- 3D/2D: Multi‑view aggregation following Wound3DAssist

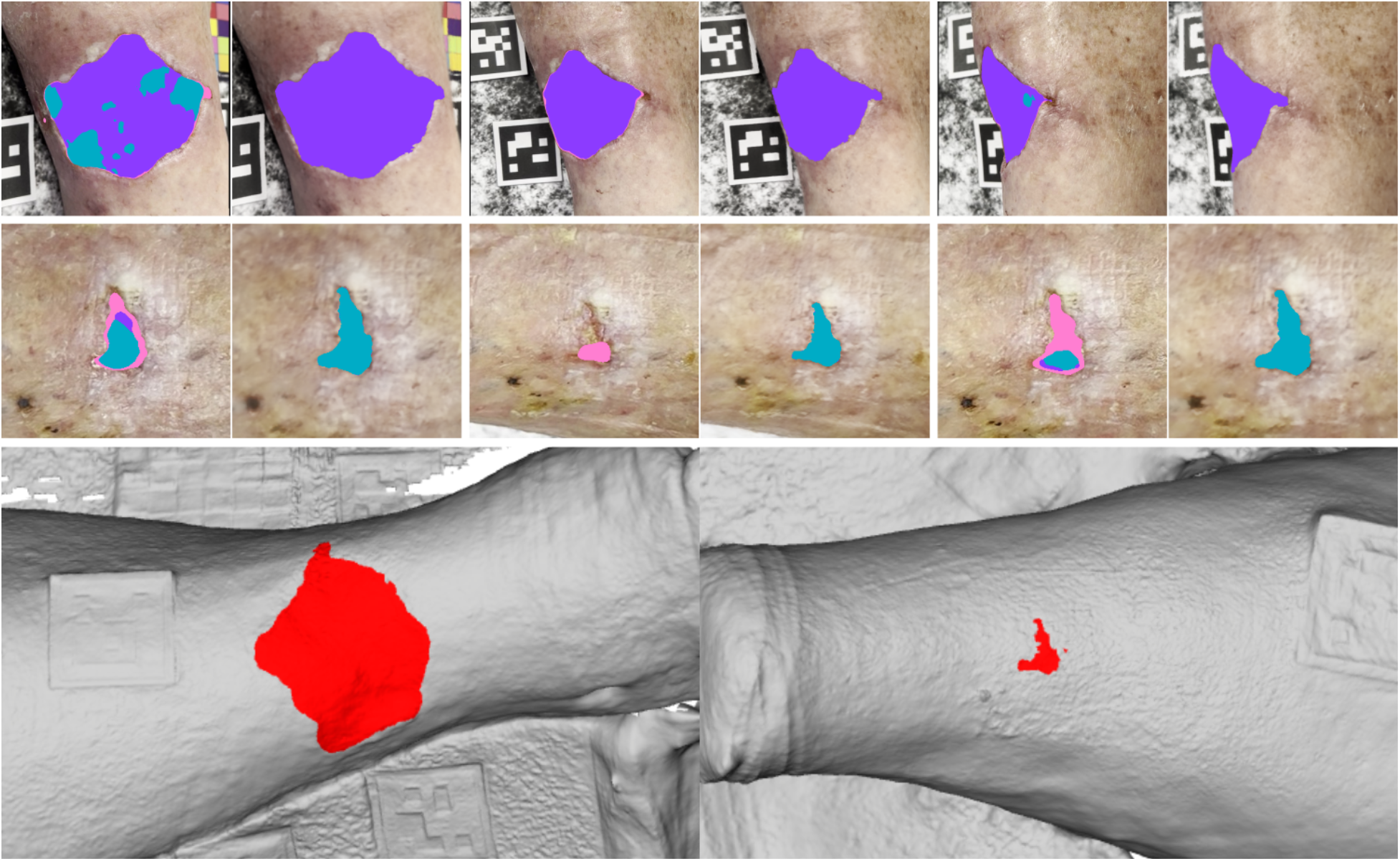

Our model produces smoother boundaries, higher recall, and stronger multi‑view coherence.

Even with limited expert annotations (2–4 views), WoundNeRF maintains consistent predictions across 50 unseen viewpoints, dramatically reducing inter‑view variation and noise.
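To make the two quantities mentioned above concrete, the following sketch computes recall for a binary mask and one simple proxy for inter-view variation. The source does not state how variation is measured, so using the population standard deviation of a per-view score is an assumption, as are the function names `recall` and `inter_view_variation`.

```python
from statistics import pstdev


def recall(pred, truth):
    """Recall of a binary mask: TP / (TP + FN). Masks are nested lists of 0/1."""
    tp = fn = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            if t and p:
                tp += 1
            elif t:
                fn += 1
    # Convention chosen here: with no wound pixels there is nothing to miss.
    return tp / (tp + fn) if (tp + fn) else 1.0


def inter_view_variation(per_view_scores):
    """Assumed proxy: spread of a per-view metric across rendered viewpoints."""
    return pstdev(per_view_scores)


pred = [[1, 1], [0, 0]]
truth = [[1, 0], [1, 0]]
score = recall(pred, truth)  # one wound pixel found, one missed
```

A lower value from `inter_view_variation` would indicate that segmentation quality changes less from one viewpoint to the next.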

Robustness

When training masks are intentionally perturbed, WoundNeRF remains stable, highlighting strong resistance to noisy supervision.
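The source does not describe how the training masks are perturbed. One common way to simulate noisy supervision, shown here purely as an assumed example, is to randomly grow or shrink each mask by a few pixels. The helpers `dilate`, `erode`, and `perturb_mask` and the `max_steps` parameter are hypothetical names introduced for this sketch.

```python
import random

NEIGHBOURS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # 4-neighbourhood


def dilate(mask):
    """Grow the foreground of a binary mask by one pixel."""
    height, width = len(mask), len(mask[0])
    out = [row[:] for row in mask]
    for y in range(height):
        for x in range(width):
            if mask[y][x]:
                for dy, dx in NEIGHBOURS:
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < height and 0 <= nx < width:
                        out[ny][nx] = 1
    return out


def erode(mask):
    """Shrink the foreground by one pixel (dilate the background, then invert).

    Pixels outside the image do not erode the foreground.
    """
    inverted = [[1 - v for v in row] for row in mask]
    return [[1 - v for v in row] for row in dilate(inverted)]


def perturb_mask(mask, max_steps=2, rng=random):
    """Apply 0..max_steps rounds of either dilation or erosion, chosen at random."""
    steps = rng.randint(0, max_steps)
    operation = rng.choice((dilate, erode))
    out = [row[:] for row in mask]  # leave the input mask untouched
    for _ in range(steps):
        out = operation(out)
    return out


block = [[1 if 1 <= y <= 3 and 1 <= x <= 3 else 0 for x in range(5)] for y in range(5)]
noisy = perturb_mask(block, max_steps=2, rng=random.Random(0))
```

Passing a seeded `random.Random` instance keeps the perturbation reproducible, which helps when comparing models trained on the same noisy masks.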

Conclusion

We present WoundNeRF, a method for generating multi‑view consistent wound segmentations.

By learning directly in 3D space, it overcomes the topological limitations of 2D segmentation and enables coherent wound reconstruction for clinical use.

Future work will explore confidence‑driven segmentation to reduce misclassified regions and enhance semantic reliability for real‑world healthcare deployment.

If you find this work useful, please cite:

@misc{chierchia2026woundnerf,
  title={Multi-View Consistent Wound Segmentation With Neural Fields},
  author={Remi Chierchia and Léo Lebrat and David Ahmedt-Aristizabal and Yulia Arzhaeva and Olivier Salvado and Clinton Fookes and Rodrigo Santa Cruz},
  year={2026},
  eprint={2601.16487},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2601.16487}
}

Related Works

If you are interested, see our previous publications:

- Syn3DWound: Project Page • Paper (arXiv) • Dataset

- SALVE: Project Page • Paper (IEEE) • Dataset

- Wound3DAssist: Paper (arXiv)

- Non-Invasive 3D Wound Measurement with RGB-D Imaging: Paper (arXiv)

Acknowledgment

This work was supported by the MRFF Rapid Applied Research Translation grant (RARUR000158), the CSIRO AI 4 Missions Minimising Antimicrobial Resistance Mission, and an Australian Government Research Training Program (AGRTP) Scholarship.